During Networking Field Day 21, Aerohive, I mean Extreme, presented their new "Cloud Driven End to End Enterprise" built on ExtremeCloud IQ, formerly HiveManager. After Extreme acquired Aerohive, there was lots of speculation in the wireless community about what would happen to the product. The most obvious conjecture was that Extreme made the purchase for the cloud technology Aerohive already had. But how would Extreme fold it into the mix with its other offerings?

Abby Strong (@wifi_princess on Twitter) started us off with a quick introduction to The New Extreme and the company's vision. As Abby started us down the path, we got some quick stats about technology users in the world, including 5.1 billion mobile users and US$2 trillion being spent on digital transformation, a term she then unpacked. Digital Transformation is one of the hot marketing buzzwords in the industry at the moment, but what is it exactly? According to Abby, "Digital Transformation is the idea of technology and policy coming together to create a new experience." This is what Extreme has been focusing on, but how? Extreme is doing it through their Autonomous Network, which combines automation, insights, infrastructure and an ecosystem, all wrapped in machine learning, AI and security.

The concept is to take the insights and information Extreme has gathered, look at issues that arise in the network, and recommend a fix: for example, flagging a likely driver issue or recommending a code upgrade to resolve a network problem. This is a really cool take on automation and insights, which is where most companies in the industry are trying to get. Based on what was shown at NFD20 in February and again at NFD21, I think Extreme is almost there, with an expanded portfolio of solutions spanning applications, switching, routing and wireless, plus an open ecosystem and open-source efforts. Check out more on those solutions and more about Extreme at https://www.extremenetworks.com/products/.

Next, Extreme walked us through their third-generation cloud solution, ExtremeCloud IQ, and showed their roadmap toward a fourth-generation cloud.

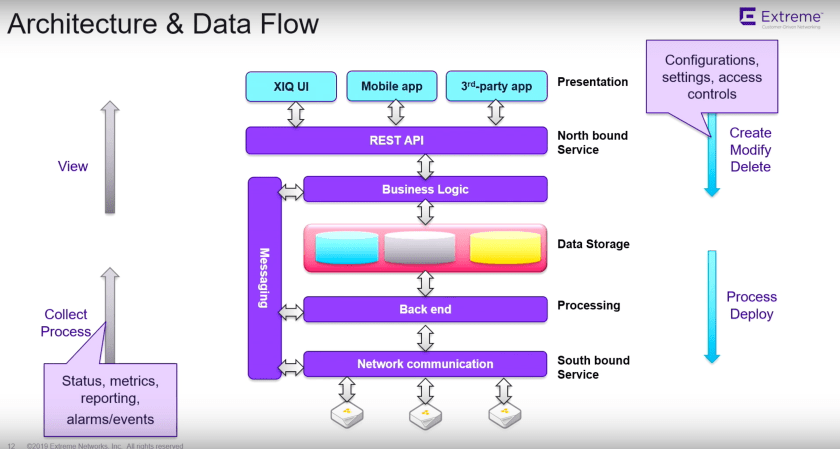

The ExtremeCloud IQ Architecture was presented by Shyam Pullela and Gregor Vučajnk (@GregorVucajnk on Twitter) with a demo of the system.

The architecture is still the previous Aerohive design. Even so, having never really dug into the product before, I was impressed with how they built the back-end cloud. Extreme currently hosts its infrastructure on AWS, but we were assured it is not dependent on AWS and could run on any cloud provider. The setup is interesting: multiple regional data centers connect back to a global data center. This builds resiliency into the system. It also lets the service run in any country where a public cloud can run, and it lets Extreme collect analytics and ML/AI data globally rather than only regionally. The same architecture allows ExtremeCloud IQ to be deployed as public cloud, private cloud or on-prem, which gives customers flexibility. From a basic cloud architecture standpoint, Extreme is not doing anything crazy or unusual with the setup. The key is the scalability designed into the system: because the architecture is simple, Extreme can scale for large organizations just by adding compute power to the back end.

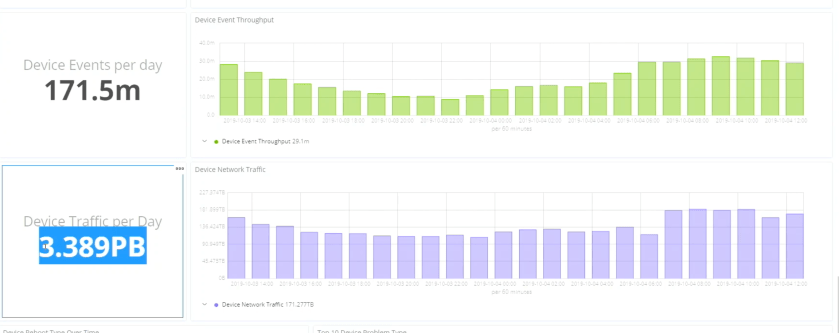

With these regional data centers in use, ExtremeCloud IQ processes data to the tune of 3.389 PETABYTES per day, drawn from an astounding number of devices and clients, to feed the ML/AI decision-making the infrastructure handles. These stats were mind-blowing to me and really show the power of what Extreme has been building, especially around the Aerohive acquisition.
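To put the daily figure in perspective, it can be converted to an average sustained rate. This is a back-of-the-envelope sketch that assumes decimal SI units (1 PB = 10^15 bytes) and a perfectly even spread over 24 hours. The presentation did not say which units were meant or how "processed" was measured, and real traffic is bursty, so peak rates would be higher. The function name is hypothetical and used only for illustration.

```python
# Back-of-the-envelope conversion of a daily data volume to an average rate.
# Assumption: decimal SI units (1 PB = 10**15 bytes); not stated in the talk.
PETABYTE = 10**15
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400 seconds


def daily_volume_to_rate(petabytes_per_day: float) -> tuple[float, float]:
    """Return the average sustained rate as (gigabytes/s, gigabits/s).

    Hypothetical helper: assumes the volume is spread evenly across the day.
    """
    bytes_per_second = petabytes_per_day * PETABYTE / SECONDS_PER_DAY
    return bytes_per_second / 10**9, bytes_per_second * 8 / 10**9


gb_s, gbit_s = daily_volume_to_rate(3.389)
print(f"{gb_s:.1f} GB/s, {gbit_s:.1f} Gbit/s")  # prints "39.2 GB/s, 313.8 Gbit/s"
```

In other words, 3.389 PB per day works out to roughly 39 GB/s, or about 314 Gbit/s, every second of every day on average.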

All of this data is fed into the cloud dashboard, as with most other vendors. The client analytics are very reminiscent of the dashboards from Cisco, Aruba, Mist, etc. There is nothing very different here, except that only 30 days of data are retained and no longer retention option is available at this time. This is not a major hit against the technology. It just means there is no way to correlate data over periods longer than one month.

One difference I see is that the system flags fewer false-positive issues. The ML built into ExtremeCloud IQ can recognize anomalies and avoid presenting them as possibly bad user sessions. False positives can cause real headaches, especially in a wireless system where users enter and leave areas with applications running. I will go deeper into these capabilities in an upcoming post.

The team on camera also did not back away from some interesting and hard questions about the product roadmaps, where things currently stand, and announcements made within 24 hours of the presentation.

All in all, the solutions and products I am seeing from Extreme are very positive. They seem to have begun integrating Aerohive nicely, and I am excited to see where they go with the big purple cloud.